[For my contribution to Accretionary Wedge #3, which will hopefully get in under the wire, I’ve decided to go for a grand and sweeping look at the interaction of geology and life.]

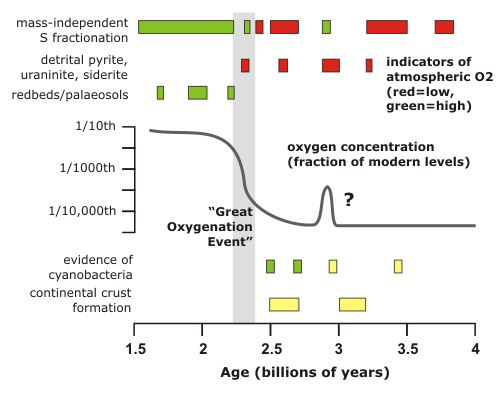

The 21% of our atmosphere that is taken up by oxygen represents one of the most profound changes that life has wrought on this planet. The very reactivity which drives the metabolism of most multicellular life means that if oxygen were not being actively replenished by photosynthesis, it would vanish within a few thousand years – a geological eyeblink. It follows that the atmosphere of the very early Earth, prior to the origin of life and particularly oxygenic photosynthesis, would have been very different from what we see today: the best guess is that it was highly reducing, with high concentrations of carbon dioxide and probably methane. Finding out when, and how, the atmosphere became oxygenated is not simple – we have no samples of the ancient atmosphere to directly consult – but the figure below summarises several lines of evidence that combine to indicate that, after a small and short-lived possible oxygen spike in the late Archean, permanent oxygenation of the Earth’s atmosphere occurred over a fairly short interval at the beginning of the Proterozoic, about 2.5 billion years ago. The main action in this ‘Great Oxygenation Event’ seems to have occurred between 2.41 and 2.32 billion years ago – so ‘short’ in this case means ‘100 million years or so’.

Most of the evidence regarding the chemical state of the early atmosphere depends on the fact that the composition of the air has a large effect on which minerals are stable at the Earth’s surface, and which minerals will be broken down by weathering. In today’s oxidising atmosphere, when minerals like pyrite (an iron sulphide), uraninite (uranium oxide) and siderite (iron carbonate) are exposed by erosion, they are quickly broken down. When you look at very old sedimentary sequences in Australia, South Africa and Canada, however, you sometimes find rounded clasts of pyrite and uraninite that have clearly been eroded and transported some distance by rivers without being altered. This can only happen in low-oxygen conditions (less than 1/1000th of the present atmospheric concentration of O2). In rocks younger than about 2.3 billion years, these detrital pyrites and uraninites are no longer found, but you do start finding something that was previously missing: iron-rich redbeds (sandstones in which the grains are coated by haematite) and palaeosols (preserved weathering horizons such as laterites). These are indicators of an oxidising environment: reduced iron minerals are usually soluble and can be leached out of sediments, whereas oxidised iron minerals are usually insoluble and will stay put. Therefore, by the time that redbeds first appear in the geological record at 2.2 billion years, oxygen concentrations in the atmosphere must have increased by several orders of magnitude.

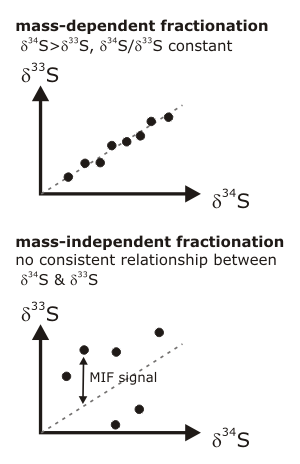

More details on this story are provided by a much more sensitive indicator of past atmospheric oxygen concentrations: the mass-independent fractionation (MIF) of sulphur isotopes. In most chemical reactions, lighter isotopes of a particular element need slightly less energy to react than heavier ones, so they are preferentially incorporated into the end product. The degree of enrichment, or fractionation, depends on the atomic mass difference between the isotopes. In the case of sulphur, 32S is fractionated with respect to both 33S and 34S. The 34S fractionation is always greater than the 33S fractionation, and always by the same proportion – in other words, if you measure the fractionation of sulphur-34 you can predict the fractionation of sulphur-33, and vice versa. However, there are some chemical reactions which don’t follow these rules: the atomic weight does not seem to matter, so the fractionation of the different isotopes occurs at random and the proportional relationship between δ34S and δ33S breaks down. This is known as mass-independent fractionation.
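As a rough illustration of that proportional relationship, here is a minimal sketch of how a departure from it can be quantified. The exponent of about 0.515 and the Δ33S notation are the conventional ones in the sulphur isotope literature rather than anything stated above, and the sample values are made up purely for illustration.

```python
# Conventional reference exponent linking 33S and 34S fractionation
# under mass-dependent behaviour (an assumption, not from the post).
LAMBDA_33 = 0.515


def predicted_delta33(delta34: float, lam: float = LAMBDA_33) -> float:
    """delta-33S (per mil) expected from delta-34S if fractionation is mass-dependent."""
    return 1000.0 * ((1.0 + delta34 / 1000.0) ** lam - 1.0)


def cap_delta33(delta33: float, delta34: float, lam: float = LAMBDA_33) -> float:
    """Delta-33S: measured delta-33S minus the mass-dependent prediction (per mil)."""
    return delta33 - predicted_delta33(delta34, lam)


# Hypothetical values, for illustration only (not real measurements).
mass_dependent = cap_delta33(delta33=predicted_delta33(20.0), delta34=20.0)
anomalous = cap_delta33(delta33=12.0, delta34=20.0)

print(f"mass-dependent sample: {mass_dependent:.2f} per mil")
print(f"anomalous sample:      {anomalous:.2f} per mil")
```

A sample that obeys the rule gives a value of zero; a value clearly different from zero is what is meant by a MIF signal.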

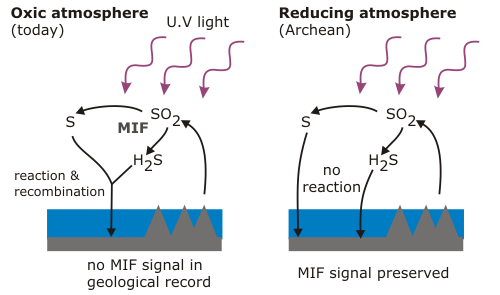

MIF of sulphur isotopes is only known to occur in the upper atmosphere, where sulphur dioxide and sulphur monoxide are broken apart by UV light. In the present atmosphere, the products of these reactions are quickly reoxidised, leaving no trace to be recorded in the minerals forming at the Earth’s surface. In a reducing atmosphere, however, this will not occur; the fractionated products of UV dissociation can remain isolated, find their way to the surface and be preserved.

Even very small amounts of oxygen – less than a hundred-thousandth of present-day concentrations – would disrupt this signal, so the large MIF signals revealed by sulphur isotope measurements in Archean rocks (red boxes in the first figure) indicate effectively zero atmospheric oxygen before 2.4 billion years ago. There is one exception: sulphides in 2.9 billion year old rocks from eastern South Africa – in fact, the upper part of the sequence I’m currently studying – show virtually no MIF signal (green box). This is the only direct evidence for the small, early oxygen peak shown in my first figure, and the fact that you can still find detrital uraninite in nearby rocks of the same age shows that even if there was oxygen in the air, it was only a tiny whiff. The next time the MIF signal vanished from the geological record was at 2.3 billion years, and following the ‘Great Oxygenation Event’ it vanished for good.
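The way the two proxies bracket the oxygen level can be written out as a small sketch. The thresholds are the approximate figures quoted in this post, expressed as fractions of the present atmospheric level (PAL); the function name and the idea of reading a missing MIF signal as a lower bound are simplifying assumptions of mine, not a published method.

```python
# Approximate thresholds quoted in the post, as fractions of the
# present atmospheric level of O2 (PAL).
DETRITAL_MAX_PAL = 1e-3  # detrital pyrite/uraninite survive only below roughly this
MIF_MAX_PAL = 1e-5       # a sulphur MIF signal is preserved only below roughly this


def oxygen_bounds(mif_signal: bool, detrital_grains: bool):
    """Return (lower, upper) bounds on O2 in PAL; None means unconstrained on that side."""
    lower, upper = None, None
    if mif_signal:
        upper = MIF_MAX_PAL
    else:
        # Assumption: a missing MIF signal is read as oxygen above the threshold.
        lower = MIF_MAX_PAL
    if detrital_grains:
        upper = DETRITAL_MAX_PAL if upper is None else min(upper, DETRITAL_MAX_PAL)
    return lower, upper


print(oxygen_bounds(mif_signal=True, detrital_grains=True))    # typical Archean rocks
print(oxygen_bounds(mif_signal=False, detrital_grains=True))   # the 2.9 billion year old rocks
print(oxygen_bounds(mif_signal=False, detrital_grains=False))  # after the Great Oxygenation Event
```

For the 2.9 billion year old rocks, the missing MIF signal and the surviving detrital uraninite together leave only a narrow window, which is why the early peak can have been no more than a whiff.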

So what was the cause of this rapid change in atmospheric chemistry? The one thing we know it was not is the evolution of photosynthetic cyanobacteria: there is direct molecular evidence of their presence in rocks as old as 2.7 billion years, and, if you believe in stromatolites, you can push back their first emergence to almost 3.5 billion. There is also that first minor peak to consider, and Lowe and Tice (2007)* think that this might be an important clue. They note that the minor oxygenation event at 2.9 billion years and the much more substantial rise in O2 levels at 2.4 billion both followed periods of rapid continental crust formation. The emergence of significant landmasses above sea-level would almost certainly have had an effect on the atmosphere, because the weathering of silicates draws down CO2. They propose that the cooling due to weathering of the first continents allowed cyanobacteria to start taking over; until then, cyanobacteria (if they were around at all) had been restricted to marginal environments by extremophiles better adapted to the warmer conditions of the Archean Earth. Increased photosynthetic oxygen production would then have allowed oxygen to start accumulating in the atmosphere, quickly oxidising and removing any methane, further cooling the planet to the cyanobacteria’s advantage, and further boosting oxygen production. Despite this possible feedback mechanism, the 2.9 billion year event seems to have petered out; possibly the limited amount of emergent continental crust in this era** was too quickly eroded down to sea-level, allowing volcanic CO2 to build up again and making things uncomfortably warm for the cyanobacteria once more. It was only when much more extensive continents formed between 2.7 and 2.5 billion years ago that the process could begin again, sustain itself, and set the stage for life as we know it to eventually emerge.

*anyone reading the references below will find that several other ideas have been put forward about why oxygenation of the atmosphere lagged so far behind the evolution of cyanobacteria, but this one ties a lot of things together quite nicely.

**the inference that there was very little continental crust in the Archean assumes that what we still find on the Earth’s surface (South Africa, Western Australia) was pretty much it. It’s still not entirely clear whether that was the case.

Holland, HD (2006). Phil. Trans. R. Soc. B. 361, p903-915 [doi].

Kasting, JF and Ono, S (2006). Phil. Trans. R. Soc. B. 361, p917-929 [doi].

Lowe, DR and Tice, MM (2007). Precamb. Res. 158, p177-197 [doi].

Ono, S et al. (2006). S. African J. Geol. 109, p97-108 [doi].
